In part I of this tutorial we argued that few-shot learning can be made tractable by incorporating prior knowledge, and that this prior knowledge can be divided into three groups. One of them is prior knowledge about class similarity: we learn embeddings from training tasks that allow us to easily separate unseen classes with few examples.

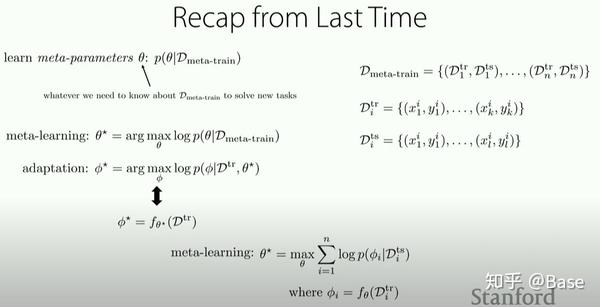

Narwariya, J., Malhotra, P., Vig, L., Shroff, G., Vishnu, T.: Meta-learning for few-shot time series classification. Lemke, C., Gabrys, B.: Meta-learning for time series forecasting and forecast combination. Jiang, X., et al.: Attentive task-agnostic meta-learning for few-shot text classification (2019) Iwata, T., Kumagai, A.: Few-shot learning for time-series forecasting. Hochreiter, S., Schmidhuber, J.: Long short-term memory. Ganin, Y., et al.: Domain-adversarial training of neural networks. arXiv preprint arXiv:1412.6581 (2014)įinn, C., Abbeel, P., Levine, S.: Model-agnostic meta-learning for fast adaptation of deep networks. 2980–2988 (2015)įabius, O., van Amersfoort, J.R.: Variational recurrent auto-encoders. KeywordsĬhung, J., Kastner, K., Dinh, L., Goel, K., Courville, A.C., Bengio, Y.: A recurrent latent variable model for sequential data. We show empirically that our proposed meta-learning method learns TSR with few data fast and outperforms the baselines in 9 of 12 experiments. Finally, we apply the data to time series of different domains, such as pollution measurements, heart-rate sensors, and electrical battery data. Moreover, based on prior work on multimodal MAML, we propose a method for conditioning parameters of the model through an auxiliary network that encodes global information of the time series to extract meta-features. In this paper, we will explore the idea of using meta-learning for quickly adapting model parameters to new short-history time series by modifying the original idea of Model Agnostic Meta-Learning (MAML).

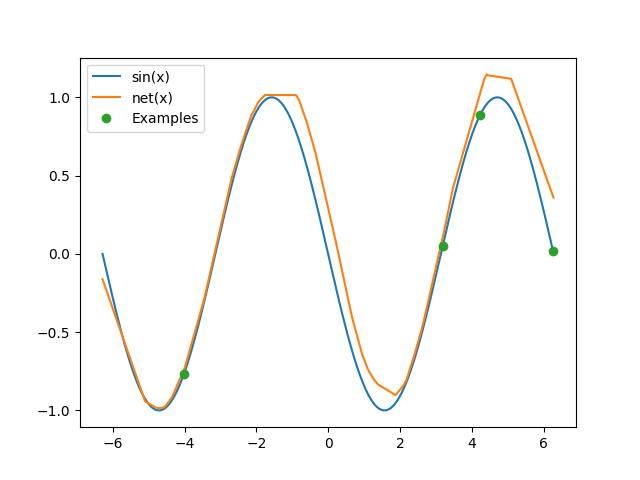

Therefore, it is important to make use of information across time series to improve learning. These models sometimes need a lot of data to be able to generalize, yet the time series are sometimes not long enough to be able to learn patterns. Recent work has shown the efficiency of deep learning models such as Fully Convolutional Networks (FCN) or Recurrent Neural Networks (RNN) to deal with Time Series Regression (TSR) problems.
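To make the idea of sharing information across time series more concrete, here is a minimal sketch of meta-learning an initialization that adapts to a new task in one gradient step. It is not the method from the paper: it uses the first-order approximation of MAML (the query gradient is taken at the adapted parameters and second-order terms are ignored), a toy linear model `y = w * x + b`, and synthetic tasks. The names `sample_task`, `adapt`, and `meta_train`, as well as all hyperparameters, are assumptions made for illustration.

```python
import random

def mse(params, xs, ys):
    """Mean squared error of the linear model y = w * x + b."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def mse_grad(params, xs, ys):
    """Gradient of the mean squared error with respect to (w, b)."""
    w, b = params
    n = len(xs)
    gw = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    gb = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return gw, gb

def adapt(params, xs, ys, inner_lr=0.1, steps=1):
    """Inner loop: a few gradient steps on the support set of one task."""
    w, b = params
    for _ in range(steps):
        gw, gb = mse_grad((w, b), xs, ys)
        w, b = w - inner_lr * gw, b - inner_lr * gb
    return w, b

def sample_task(rng, n=5):
    """Hypothetical toy task: a line with a task-specific slope near 3."""
    slope = 3.0 + rng.uniform(-0.5, 0.5)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return xs, [slope * x + 1.0 for x in xs]

    return draw(), draw()  # (support set, query set)

def meta_train(iterations=2000, meta_lr=0.01, seed=0):
    """Outer loop: move the shared initialization toward values that
    give low query loss after adaptation (first-order approximation)."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(iterations):
        (sx, sy), (qx, qy) = sample_task(rng)
        adapted = adapt((w, b), sx, sy)
        # First-order shortcut: query gradient evaluated at the adapted parameters.
        gw, gb = mse_grad(adapted, qx, qy)
        w, b = w - meta_lr * gw, b - meta_lr * gb
    return w, b

if __name__ == "__main__":
    init = meta_train()
    (sx, sy), (qx, qy) = sample_task(random.Random(123))
    print("query loss, meta-learned init:", mse(adapt(init, sx, sy), qx, qy))
    print("query loss, zero init:        ", mse(adapt((0.0, 0.0), sx, sy), qx, qy))
```

On a new task with only five support points, one adaptation step from the meta-learned initialization should reach a much lower query loss than the same step from a zero initialization. The full MAML update would also differentiate through the inner step, which in practice is usually left to an automatic differentiation library.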
